Hans-Peter Brückner1, Gerd Schmitz2, Daniel Scholz3, Alfred Effenberg2*, Eckart Altenmüller3*, Holger Blume1*

1 Institute for Microelectronic Systems, Leibniz Universität Hannover

2 Institute for Sport Science, Leibniz Universität Hannover

3 Institute for Music Physiology and Musician's Medicine, University for Music, Drama, and Media, Hannover

* Corresponding authors

Introduction

After a stroke, patients often suffer from severely constrained mobility and partial paralysis of the limbs. Movements of the upper extremities, such as grasping, are impaired especially often. Grasping movements of stroke patients are typically characterized by reduced accuracy and speed and by high variability. In common rehabilitative approaches, improvement is achieved through massed practice of a given motor task under the supervision of a therapist.

In this project, complex sonification of arm movements is used to generate movement-based auditory feedback. Sonification in general is the display of non-speech information through audio signals [1]. Here, we use sonification to acoustically enhance the patient’s perception and to support motor processes, aiming at smooth, target-oriented movement without dysfunctional motions. Stroke rehabilitation focuses mainly on grasping tasks because regaining them is central to restoring the patients' self-reliance.

The project’s research goals are 1) evaluating primary movement patterns for motor learning, 2) designing an informative acoustic feedback, 3) testing its efficiency, whether achieved consciously or unconsciously, in grasping movements, and 4) designing and evaluating a low-power, low-latency hardware platform for inertial sensor data processing and sound synthesis.

Sonification based motor learning research

Sonification based on human movement data is effective in healthy subjects in enhancing motor perception, motor control and motor learning: perception of gross motor movements became more precise, motor control became more accurate with reduced variance [2], and motor learning was accelerated [3]. These benefits are elicited by engaging additional audiomotor and multisensory functions within the brain [4], apparently independent of whether attention is drawn to the sonification. Here we optimize 3D movement sonification for basic movement patterns of the upper limbs to support the relearning of everyday movement patterns in stroke rehabilitation. Because movement data are used directly for 3D sound modulation, a high degree of structural equivalence to visual and proprioceptive percepts is achieved. Whereas the musical movement sonification of the co-project group aims at conscious processing and a music-like sound, the movement-data-defined sonification described here can become effective without drawing attention to it. For stroke rehabilitation, a synthesized sonification merging musical and movement-data-defined components could be used.

Acoustic Feedback Design

To be helpful in stroke rehabilitation, the acoustic feedback should be agreeable and easy to understand, meaning that limb positions should correspond clearly and learnably to a three-dimensional sound mapping. This will be achieved with a sonification that has essentially the same features as real acoustic instruments. These features will be linked to the three-dimensional movement trajectories so that the sonification can be played in real time solely through movements of a limb. Furthermore, the sound design should be pleasant to listen to, since only then can positive emotional valence and joy in playing the instrument be elicited.
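
The idea of a learnable correspondence between limb position and sound can be illustrated with a minimal sketch. The axis assignment (left/right to stereo pan, height to pitch, forward reach to loudness), the workspace bounds and the frequency range are all assumptions chosen for illustration; they are not the mapping used in the project.

```python
from dataclasses import dataclass


@dataclass
class SoundParams:
    frequency_hz: float  # pitch of the synthesized tone
    amplitude: float     # linear gain in [0, 1]
    pan: float           # stereo position, -1 (left) to +1 (right)


def _normalize(value, lo, hi):
    """Clamp value to [lo, hi] and rescale it to [0, 1]."""
    clamped = min(max(value, lo), hi)
    return (clamped - lo) / (hi - lo)


def map_position_to_sound(x, y, z,
                          workspace=((-0.5, 0.5), (0.0, 1.0), (0.0, 0.8))):
    """Map a wrist position in metres to sound parameters.

    Hypothetical mapping: x (left/right) -> pan, y (height) -> pitch,
    z (forward reach) -> loudness. The workspace bounds are assumed.
    """
    (x_lo, x_hi), (y_lo, y_hi), (z_lo, z_hi) = workspace
    pan = 2.0 * _normalize(x, x_lo, x_hi) - 1.0
    # Exponential pitch scale over two octaves (220 Hz to 880 Hz), so that
    # equal changes in height are heard as equal musical intervals.
    frequency = 220.0 * 2.0 ** (2.0 * _normalize(y, y_lo, y_hi))
    # Keep a minimum gain so the feedback never falls silent.
    amplitude = 0.2 + 0.8 * _normalize(z, z_lo, z_hi)
    return SoundParams(frequency, amplitude, pan)
```

An exponential pitch scale is used here because pitch perception is roughly logarithmic in frequency; a linear mapping would compress the upper part of the movement range perceptually.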

In previous studies we demonstrated that music-supported training (MST) in stroke patients facilitates rehabilitation of fine motor skills of the paretic hand [5, 6, 7]. About six weeks after the stroke, patients were asked to replay simple tunes on a keyboard or on an electronic set of drum pads and were trained to systematically increase their sensorimotor skills during a three-week training period. The recovery of motor skills was more pronounced than in a control group undergoing constraint-induced therapy (CIT). Extending this study, we now aim to reproduce these effects in patients using real-time feedback on reaching movements of the arm in space.

Hardware Architecture Design

A key research area is the design of a portable hardware demonstrator for use in stroke rehabilitation. The technical requirements are low-latency processing, low power consumption and a small form factor to enable portable use in home-based rehabilitation sessions. The overall latency constraint for the hardware and software system was 30 ms, as higher latencies are clearly noticeable and confusing for humans [8].
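
How a 30 ms end-to-end constraint might be checked against the individual stages can be sketched as a simple latency budget. The decomposition into sensor update period, processing time and audio output buffer, as well as all numeric values in the usage note, are assumptions for illustration and not measurements from the project.

```python
LATENCY_LIMIT_MS = 30.0  # overall constraint stated in the text


def audio_buffer_latency_ms(buffer_frames, sample_rate_hz):
    """Time needed to play out one audio buffer, in milliseconds."""
    return 1000.0 * buffer_frames / sample_rate_hz


def total_latency_ms(sensor_rate_hz, processing_ms, buffer_frames,
                     sample_rate_hz):
    """Sum a hypothetical three-stage latency budget.

    The sensor stage is taken as one full update period, i.e. the
    worst-case wait for a fresh sample.
    """
    sensor_period_ms = 1000.0 / sensor_rate_hz
    return (sensor_period_ms
            + processing_ms
            + audio_buffer_latency_ms(buffer_frames, sample_rate_hz))


def meets_budget(latency_ms, limit_ms=LATENCY_LIMIT_MS):
    """Return True if the summed latency stays within the limit."""
    return latency_ms <= limit_ms
```

With assumed values of a 100 Hz sensor update rate, 5 ms of processing and a 441-frame buffer at 44.1 kHz, the stages sum to 10 ms + 5 ms + 10 ms = 25 ms, which stays within the limit; halving the sensor rate to 50 Hz would already exceed it.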

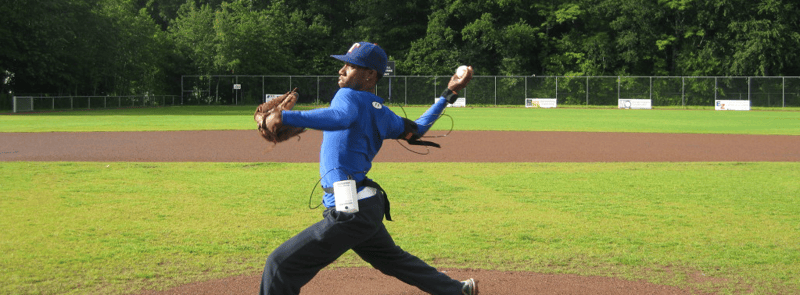

To allow complex arm movements such as drinking or tooth brushing to be captured, a design goal of both the PC-based demonstrator and the hardware demonstrator is to support up to ten MTx sensors and an Xbus Master device. For basic grasping tasks, the inertial sensor setup can be chosen according to [9], with one sensor attached to the upper arm and one to the forearm. In [9], the sonification acoustically displays the wrist position captured by the inertial sensors, which provides information about grasping movement performance.
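
One plausible way to obtain a wrist position from two orientation sensors is simple forward kinematics, sketched below. This is not necessarily the method of [9]. It assumes that each sensor delivers a unit quaternion (w, x, y, z) in a common global frame with z pointing up, that each segment lies along its sensor's local x axis, that the shoulder is fixed at the origin, and that the segment lengths are known; the default lengths are hypothetical.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def rotate(q, v):
    """Rotate vector v by the unit quaternion q = (w, x, y, z)."""
    w, x, y, z = q
    u = (x, y, z)
    # Standard identity: v' = v + w*t + u x t, with t = 2 * (u x v).
    t = tuple(2.0 * c for c in cross(u, v))
    u_cross_t = cross(u, t)
    return tuple(v[i] + w * t[i] + u_cross_t[i] for i in range(3))


def wrist_position(q_upper_arm, q_forearm, upper_arm_m=0.30, forearm_m=0.25):
    """Wrist position relative to the shoulder, in metres.

    Chains the two rotated segment vectors: shoulder -> elbow -> wrist.
    Segment lengths are assumed values, not taken from the source.
    """
    elbow = rotate(q_upper_arm, (upper_arm_m, 0.0, 0.0))
    forearm = rotate(q_forearm, (forearm_m, 0.0, 0.0))
    return tuple(elbow[i] + forearm[i] for i in range(3))
```

Under these assumptions, an unrotated upper arm combined with a forearm turned by 90 degrees about the vertical axis places the wrist at approximately (0.30, 0.25, 0.0). In practice, sensor-to-segment alignment and drift would need calibration, which this sketch omits.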

Using movement sonification in sports or rehabilitation requires fully mobile and portable sonification systems. Depending on the selected mapping parameters, sample-based sound synthesis becomes computationally intensive. Therefore, processors with a high power demand are required. PC-based hardware platforms [10], [11] require a high power budget and are limited to stationary use.

This project group explores hardware platforms for real-time sonification of complex movements captured by inertial sensors. The system has to be flexible enough to scale the number of MTx sensors according to motion capturing demands. The generated stereo audio signal is played back through speakers. The basic system structure is shown in Figure 1. Sensor data acquisition, sonification parameter calculation and audio synthesis are handled on the hardware platform with the attached motion capture system [12].

Corresponding paper:

Real-time low latency movement sonification in stroke rehabilitation based on a mobile platform

Authors: Brückner, H. P.; Theimer, W.; Blume, H.

2014 IEEE International Conference on Consumer Electronics (ICCE), pp. 264-265, 2014

DOI: 10.1109/ICCE.2014.6775997

Corresponding Project Groups

Sports Science

Institute of Sport Science, Leibniz University Hannover, Alfred Effenberg

Email: Alfred Effenberg

Web: www.sportwiss.uni-hannover.de

Music Physiology and Musician's Medicine

Institute for Music Physiology and Musician's Medicine, University for Music, Drama, and Media, Hannover

Eckart Altenmüller

Email: Eckart Altenmüller

Web: www.immm.hmtm-hannover.de

Hardware Architecture Design

Institute of Microelectronic Systems

Leibniz University Hannover

Holger Blume (Corresponding author)

Email: Holger Blume

Web: www.ims.uni-hannover.de

References

[1] G. Kramer, An introduction to auditory display, Addison Wesley Longman, 1992.

[2] A. O. Effenberg, “Movement sonification: Effects on perception and action,” IEEE Multimedia, vol. 12, no. 2, pp. 53-59, 2005.

[3] A. O. Effenberg, U. Fehse and A. Weber, “Movement sonification: Audiovisual benefits on motor learning,” BIO Web of Conferences, vol. 1, no. 00022, pp. 1-5, 2011.

[4] L. Scheef, H. Boecker, M. Daamen, U. Fehse, M. W. Landsberg, D. O. Granath, H. Mechling and A. O. Effenberg, “Multimodal motion processing in area V5/MT: Evidence from an artificial class of audio-visual events,” Brain Research, vol. 1252, pp. 94-104, 2009.

[5] S. Schneider, P. W. Schönle, E. Altenmüller and T. F. Münte, “Using musical instruments to improve motor skill recovery following a stroke,” J Neurol, vol. 254, pp. 1339-1346, 2007.

[6] E. Altenmüller, S. Schneider, P. W. Marco-Pallares and T. F. Münte, “Neural reorganization underlies improvement in stroke induced motor dysfunction by music supported therapy,” Ann NY Acad Sci, vol. 1169, pp. 395-405, 2009.

[7] S. Schneider, T. F. Münte, A. Rodriguez-Fornells, M. Sailer and E. Altenmüller, “Music supported training is more efficient than functional motor training for recovery of fine motor skills in stroke patients,” Music Perception, vol. 27, pp. 271-280, 2010.

[8] D. Levitin, K. MacLean, M. Mathews, L. Chu and E. Jensen, “The perception of cross-modal simultaneity,” International Journal of Computing Anticipatory Systems, 2000.

[9] H.-P. Brückner, C. Bartels and H. Blume, “PC-based Real Time Sonification of Human Motion Captured by Inertial Sensors,” The 17th Annual International Conference on Auditory Display, 2011.

[10] T. Hermann, O. Höner and H. Ritter, “AcouMotion--An Interactive Sonification System for Acoustic Motion Control,” Gesture in Human-Computer Interaction and Simulation, pp. 312-323, 2006.

[11] K. Vogt, D. Pirrò, I. Kobenz, R. Höldrich and G. Eckel, “PhysioSonic - Evaluated Movement Sonification as Auditory Feedback in Physiotherapy,” Auditory Display, pp. 103-120, 2010.

[12] H.-P. Brückner, M. Wielage and H. Blume, “Intuitive and Interactive Movement Sonification on a Heterogeneous RISC / DSP Platform,” The 18th Annual International Conference on Auditory Display, 2012.

Acknowledgement

We would like to thank the European Union (EU) for financially supporting this research as project W2-80118660 within the European Regional Development Fund (EFRE).